Kimi K2.5, the 1-trillion-parameter open-weight model that turns screenshots into code, coordinates up to 100 parallel agents, and outperforms leading closed models on key agentic benchmarks, is now available on Nscale Inference Endpoints.

What sets Kimi K2.5 apart

Kimi K2.5 from Moonshot AI is an open-weight, natively multimodal model built on several distinctive architectural choices.

It uses a Mixture-of-Experts (MoE) architecture with 1 trillion total parameters, but activates only a small subset of them (roughly 32 billion) for each token. This delivers frontier-level capability at a far lower inference cost than a dense model of the same size would need.
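The routing idea behind an MoE layer fits in a few lines. The toy sketch below scores every expert for one token, runs only the top-k, and mixes their outputs with softmax weights renormalised over the selected experts. The eight experts, k=2, the linear router, and the scaling "experts" are hypothetical illustration values, and softmax-over-top-k is only one common gating variant; Kimi K2.5's actual router and configuration may differ.

```python
import math
import random


def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def moe_forward(token, experts, router_weights, k=2):
    """Route one token vector to its top-k experts and mix their outputs.

    token: list[float] of length d
    experts: callables mapping a length-d vector to a length-d vector
    router_weights: one length-d weight vector per expert (toy linear router)
    Returns (output_vector, indices_of_experts_used).
    """
    # Router score for each expert: dot product of the token with its weights.
    scores = [sum(w * x for w, x in zip(wv, token)) for wv in router_weights]
    # Keep only the k highest-scoring experts; the others are never evaluated.
    top = sorted(range(len(experts)), key=lambda i: scores[i], reverse=True)[:k]
    # Renormalise gate weights over the selected experts only.
    gates = softmax([scores[i] for i in top])
    out = [0.0] * len(token)
    for gate, i in zip(gates, top):
        y = experts[i](token)  # only k expert computations happen for this token
        out = [o + gate * yi for o, yi in zip(out, y)]
    return out, top


def make_expert(scale):
    # Hypothetical toy expert that scales its input; real experts are feed-forward networks.
    return lambda v: [scale * x for x in v]


if __name__ == "__main__":
    random.seed(0)
    d, n_experts = 4, 8
    experts = [make_expert(s + 1.0) for s in range(n_experts)]
    router = [[random.uniform(-1, 1) for _ in range(d)] for _ in range(n_experts)]
    out, used = moe_forward([0.5, -0.2, 0.1, 0.9], experts, router, k=2)
    print(f"experts used: {used} of {n_experts}")
```

The point of the design is that compute per token scales with k, not with the total number of experts, which is how a very large MoE model keeps inference affordable.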

Unlike models where vision was added later, Kimi K2.5 was trained from the ground up on 15 trillion mixed visual and text tokens. Images and text are processed as a single unified stream rather than through separate pipelines, which supports cross-modal reasoning that goes well beyond simple image captioning.

On Nscale, developers control model behaviour through thinking mode, set per request: enable it for deeper, multi-step reasoning, or turn it off when latency matters more than depth.
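Per-request control over thinking mode might look like the sketch below, which builds a chat-completions payload and enables thinking only for tasks flagged as complex. The `thinking` field name and its `{"type": "enabled" | "disabled"}` shape are placeholders assumed for illustration, not a confirmed Nscale parameter; check the Nscale API reference for the real switch before relying on it.

```python
import json

MODEL = "moonshotai/Kimi-K2.5"

# ASSUMPTION: placeholder name for the thinking-mode switch. Confirm the real
# request field in Nscale's API documentation before using it.
THINKING_FIELD = "thinking"


def build_payload(prompt: str, deep_reasoning: bool) -> dict:
    """Build a chat-completions payload, toggling thinking mode per request."""
    payload = {
        "model": MODEL,
        "messages": [{"role": "user", "content": prompt}],
    }
    # Hypothetical toggle: thinking on for multi-step work, off when a fast,
    # shallow answer is enough.
    payload[THINKING_FIELD] = {"type": "enabled" if deep_reasoning else "disabled"}
    return payload


if __name__ == "__main__":
    quick = build_payload("Summarise this sentence in five words.", deep_reasoning=False)
    deep = build_payload("Plan a three-step migration from REST to gRPC.", deep_reasoning=True)
    print(json.dumps(quick, indent=2))
    print(json.dumps(deep, indent=2))
```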

Kimi K2.5 in practice

Kimi K2.5’s combination of native vision, long-context reasoning, and strong coding performance makes it well suited to workflows where inputs are messy, tasks are multi-step, and outputs need to be production-ready.

Below are three areas where it stands out.

1. Design-to-code and visual development

What it does: Kimi K2.5 takes a screenshot or mockup and generates fully functional front-end code, including dynamic layouts, scroll animations, and component hierarchies. It also supports visual debugging directly from screenshots.

Good for: Product teams iterating on UI, agencies turning briefs into prototypes, and developers working from visual inputs rather than text.

By the numbers: 76.8% on SWE-Bench Verified, showing capability that extends beyond front-end work into real-world software engineering tasks.
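A screenshot can travel in the same chat request as the instructions. The sketch below assumes the endpoint accepts OpenAI-style `image_url` content parts carrying a base64 data URL; that request shape, the helper `screenshot_to_code_payload`, and the file name `mockup.png` are assumptions for illustration, not documented Nscale behaviour.

```python
import base64
import json
from pathlib import Path

MODEL = "moonshotai/Kimi-K2.5"


def screenshot_to_code_payload(image_bytes: bytes, mime: str = "image/png") -> dict:
    """Build a hypothetical design-to-code request from raw screenshot bytes."""
    # Encode the image inline as a data URL (ASSUMPTION: the endpoint accepts
    # OpenAI-style image_url content parts for this model).
    data_url = f"data:{mime};base64," + base64.b64encode(image_bytes).decode("ascii")
    return {
        "model": MODEL,
        "messages": [
            {
                "role": "user",
                "content": [
                    {"type": "image_url", "image_url": {"url": data_url}},
                    {
                        "type": "text",
                        "text": "Generate responsive HTML and CSS that reproduces this layout.",
                    },
                ],
            }
        ],
    }


if __name__ == "__main__":
    path = Path("mockup.png")  # placeholder file name
    if path.exists():
        print(json.dumps(screenshot_to_code_payload(path.read_bytes()))[:200])
```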

2. Autonomous research and information workflows

What it does: Thinking mode works through complex, multi-step research tasks, synthesizing information across sources, reasoning over long contexts, and producing structured outputs without requiring custom orchestration.

Good for: Internal knowledge tools, due diligence pipelines, competitive analysis, and large-scale content research.

By the numbers: 60.6% on BrowseComp, outperforming Claude Opus 4.5 (37.0%) and Gemini 3 Pro (37.8%) on deep research retrieval.

3. Document and knowledge work automation

What it does: Processes large, dense inputs such as contracts, financial reports, and technical documents, and produces structured outputs including reports, spreadsheets, slide decks, or data extracts. The 256K-token context window lets many long documents be processed in a single request, without chunking.

Good for: Enterprise knowledge workflows, internal copilots, and regulated environments requiring auditable outputs.

By the numbers: 87.6% on GPQA-Diamond, indicating strong performance on complex reasoning tasks.
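Whether a document fits without chunking depends on its token count. The check below is a rough sketch: it assumes "256K" means 262,144 tokens and uses a heuristic of about four characters per token for English text. Both values, and the prompt and output reserves, are assumptions; use a real tokenizer count for anything close to the limit.

```python
CONTEXT_TOKENS = 256 * 1024  # ASSUMPTION: "256K" read as 262,144 tokens
CHARS_PER_TOKEN = 4.0        # ASSUMPTION: rough English-text heuristic, not the real tokenizer


def estimate_tokens(text: str) -> int:
    """Very rough token estimate from character count."""
    return int(len(text) / CHARS_PER_TOKEN) + 1


def fits_in_context(document: str, prompt_overhead: int = 1_000,
                    reserved_output: int = 16_000) -> bool:
    """Return True if the document, prompt, and output budget fit in one request."""
    needed = estimate_tokens(document) + prompt_overhead + reserved_output
    return needed <= CONTEXT_TOKENS


if __name__ == "__main__":
    contract = "Clause text. " * 50_000  # 650,000 characters, about 162K tokens by this heuristic
    print(estimate_tokens(contract), fits_in_context(contract))
```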

Performance at a glance

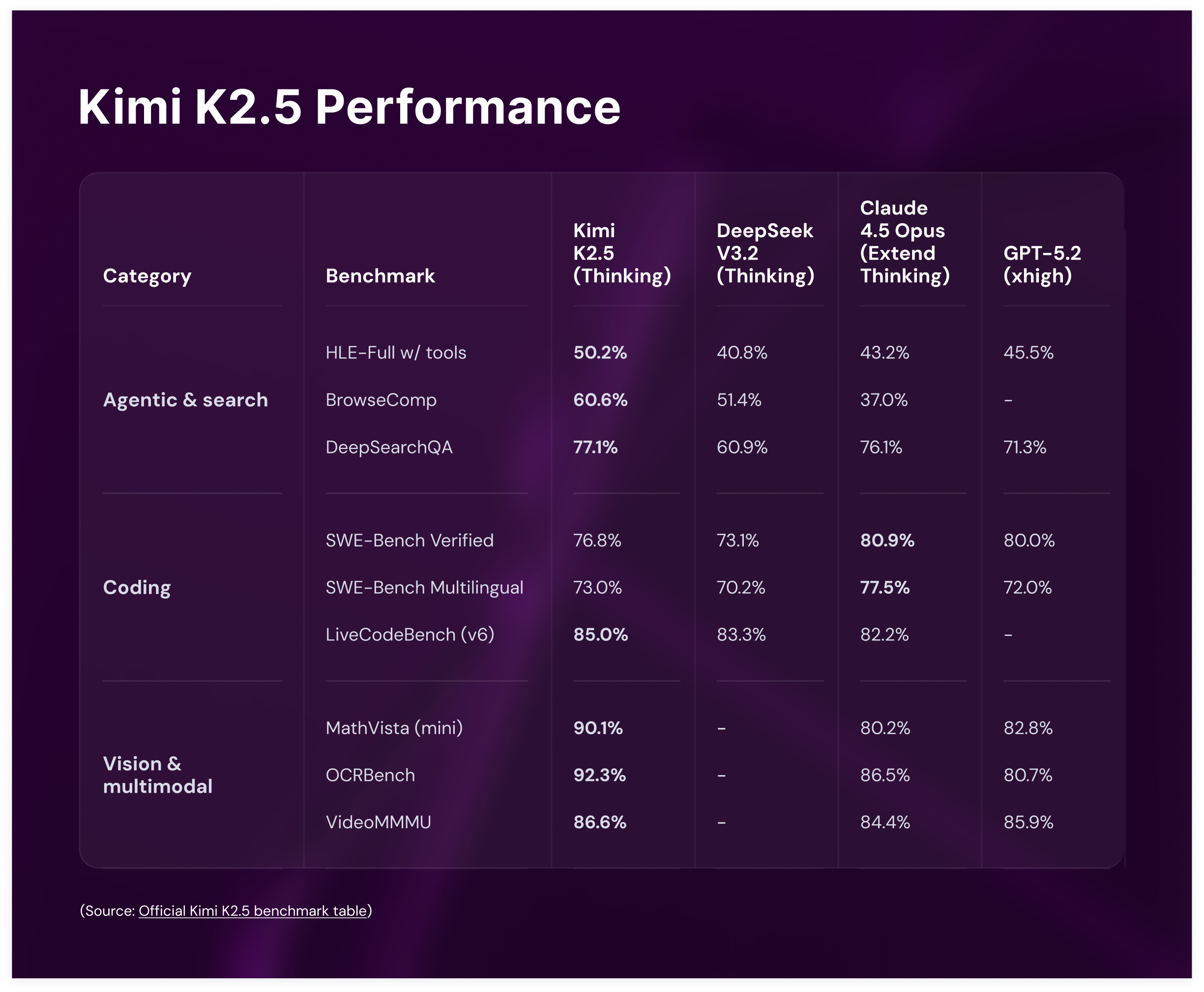

Kimi K2.5 leads on agentic and visual tasks and remains competitive on coding, delivering strong performance across real-world workloads as an open-weight model.

For developers, this translates to a model that handles multi-step, multimodal workflows reliably across a wide range of tasks.

Integrate Kimi K2.5 on Nscale

Nscale is a full-stack AI cloud built on owned infrastructure with reserved GPU capacity.

Running Kimi K2.5 on Nscale provides:

Fully managed inference. Deploy and scale workloads from prototype to production with managed endpoints, supporting both low-latency and high-throughput use cases.

Compare before committing. Nscale Prompt Workbench supports reproducible prompt engineering with versioning, fast comparisons, and direct export to inference endpoints.

Ship in minutes. No clusters, GPUs, or infrastructure to manage.

Predictable costs. Token-level pricing with real-time cost dashboards. Kimi K2.5 is priced at $0.45 input / $2.20 output per 1M tokens.

Data protection by design. Strict customer isolation with no training on request or response data.
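Token-level pricing turns per-request cost into simple arithmetic. The sketch below uses the Kimi K2.5 prices quoted above ($0.45 per 1M input tokens, $2.20 per 1M output tokens); the token counts in the example are illustrative, and actual billing may round differently.

```python
INPUT_USD_PER_M = 0.45   # Kimi K2.5 input price per 1M tokens (from the pricing above)
OUTPUT_USD_PER_M = 2.20  # Kimi K2.5 output price per 1M tokens


def request_cost_usd(input_tokens: int, output_tokens: int) -> float:
    """Estimated cost of one request in US dollars."""
    return (input_tokens * INPUT_USD_PER_M + output_tokens * OUTPUT_USD_PER_M) / 1_000_000


if __name__ == "__main__":
    # Example: a 10,000-token prompt that produces a 2,000-token answer.
    per_request = request_cost_usd(10_000, 2_000)
    print(f"per request: ${per_request:.4f}")  # 0.0045 + 0.0044 = $0.0089
    print(f"per 1,000 requests: ${per_request * 1000:.2f}")
```

At that rate, the $5 of free credits offered at sign-up would cover roughly 560 requests of this size.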

Get started in minutes

Getting started with Kimi K2.5 on Nscale is straightforward:

- Sign up for the Nscale console

- Get $5 in free credits to start experimenting immediately

- Navigate to AI Services → Models and select Kimi K2.5

- Send your first request via the API or console

From signup to first output, the whole process takes only minutes.

Or make your first text request straight from the terminal:

    curl -sS "https://inference.api.nscale.com/v1/chat/completions" \
      -H "Authorization: Bearer $NSCALE_SERVICE_TOKEN" \
      -H "Content-Type: application/json" \
      -d '{
        "model": "moonshotai/Kimi-K2.5",
        "messages": [
          { "role": "user", "content": "Tell me a joke." }
        ]
      }'
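The same request can be sent from Python. This is a minimal sketch using only the standard library; it assumes the endpoint returns an OpenAI-style response with the reply at `choices[0].message.content`, so inspect the raw JSON if the shape differs. The helper names `build_request` and `ask` are illustrative.

```python
import json
import os
import urllib.request

URL = "https://inference.api.nscale.com/v1/chat/completions"


def build_request(prompt: str, token: str) -> urllib.request.Request:
    """Build the same POST request as the curl example."""
    body = json.dumps({
        "model": "moonshotai/Kimi-K2.5",
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        URL,
        data=body,
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the request and return the reply text (ASSUMPTION: OpenAI-style response)."""
    req = build_request(prompt, os.environ["NSCALE_SERVICE_TOKEN"])
    with urllib.request.urlopen(req, timeout=120) as resp:
        data = json.load(resp)
    return data["choices"][0]["message"]["content"]
```

With `NSCALE_SERVICE_TOKEN` set in the environment, `print(ask("Tell me a joke."))` mirrors the terminal example.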

Kimi K2.5 is available on Nscale Inference Endpoints now. Whether you are building agentic pipelines, front-end tooling, or research automation, it is ready to use.

Kimi K2.5 is one of a growing collection of models available on Nscale, alongside a selection of model variants covering GPT-OSS, Qwen, Llama, DeepSeek, Stable Diffusion, and more. From serverless inference and fine-tuning to Prompt Workbench, explore our full suite of AI Services.
