Seamlessly build, tune, and run AI

Deliver advanced AI with confidence using scalable inference endpoints, controlled fine-tuning workflows, and a unified workbench for prompt engineering across teams and environments.

Move faster without compromise across the AI lifecycle

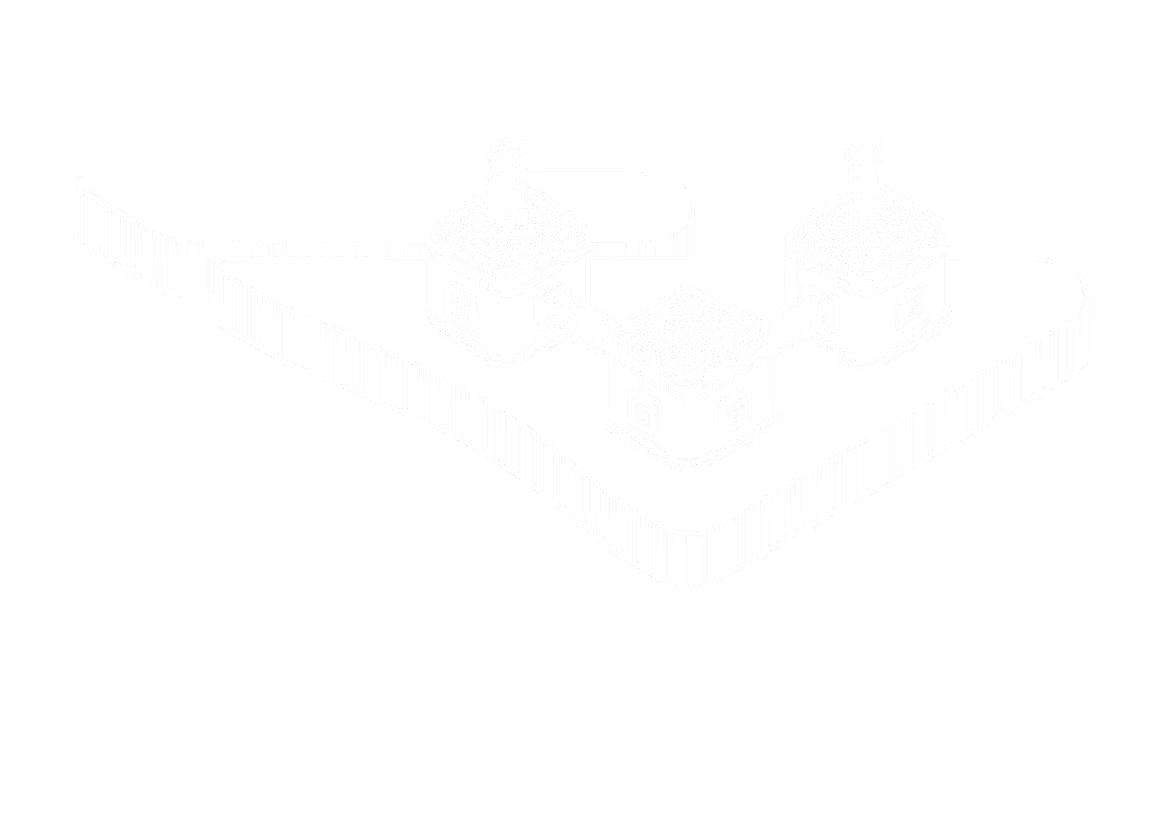

Inference Endpoints

Deploy and scale production inference with fully managed endpoints.

- Ship inference in minutes. No clusters, GPUs, or infrastructure to operate

- Scale from prototype to production with low-latency and high throughput

- Meet data compliance requirements with strict customer isolation

Fine-Tuning

Customize foundation models to your enterprise data with low-friction fine-tuning.

- Fine-tune models with your own data to align behavior, accuracy, and outputs

- Lower the cost and complexity of fine-tuning through a streamlined workflow

- Move tuned models into production in a repeatable, governed flow

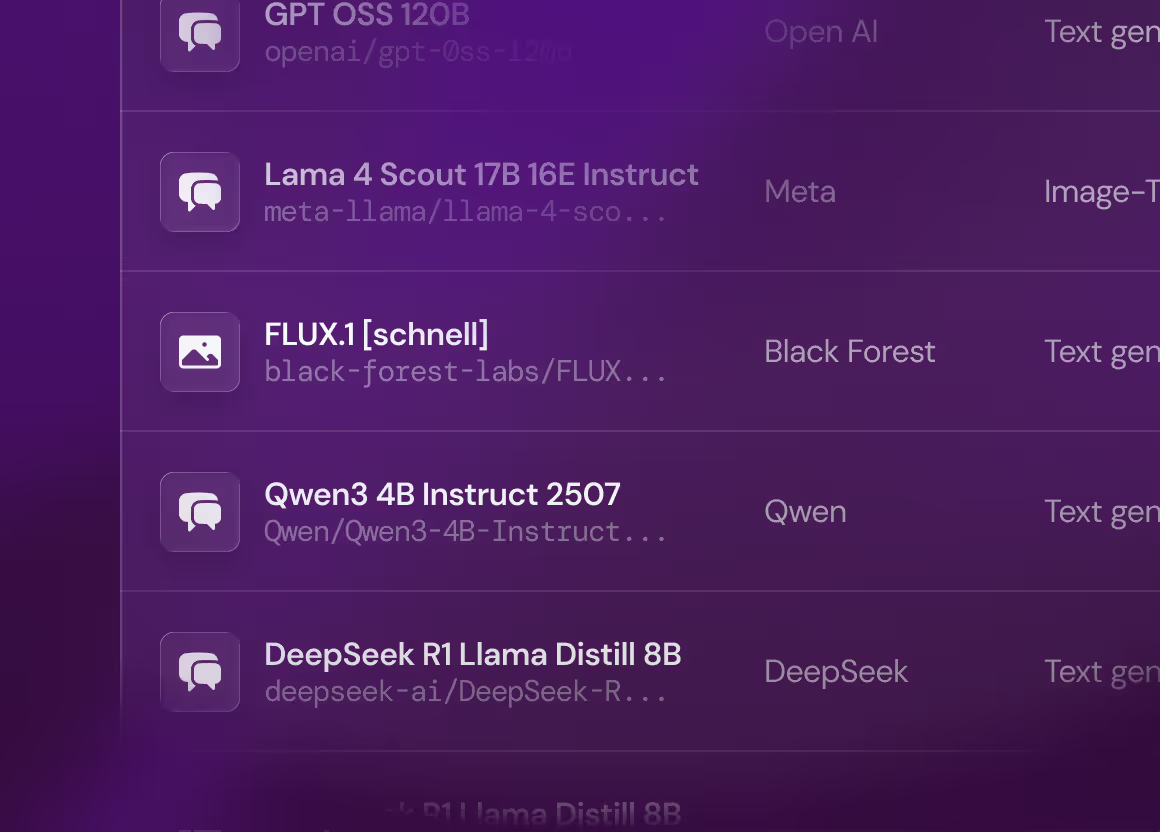

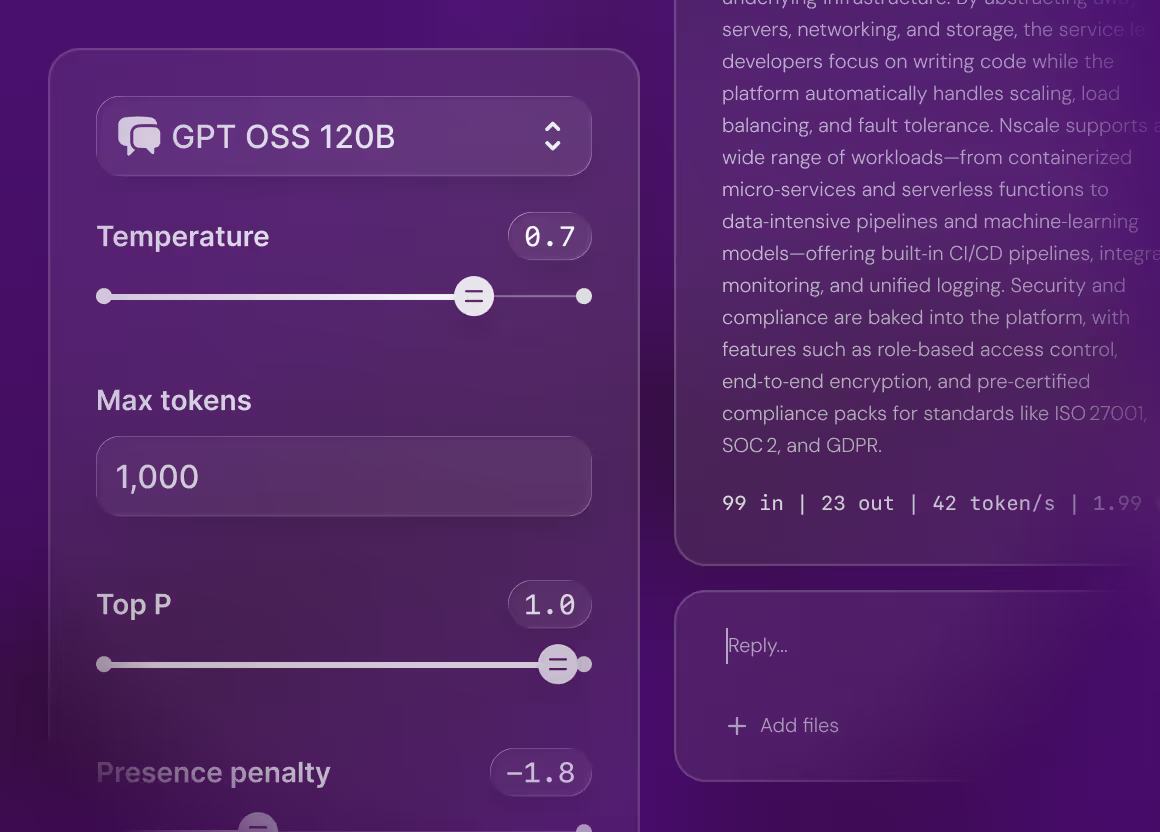

Prompt Workbench

Make prompt engineering reproducible, collaborative, and production-ready.

- Bring structure to prompt engineering with repeatable experiment runs

- Reduce trial-and-error cost and time-to-prototype without burning GPU hours

- Move seamlessly from experimentation to production

AI services built for production

Experiment faster

Accelerate prompt iteration and tuning in a browser workbench with versioning and direct usage with inference endpoints.

Scale with confidence

Run serverless, autoscaling inference on Nscale-managed GPUs with integrated observability and strict data boundaries.

Ship reliably

Combine reproducible prompts and fine-tuning with managed inference, monitoring, and versioning to deliver predictable, production-grade AI at scale.

Use the most popular and best-performing models

The Nscale Production Engine

Lorem ipsum dolor sit amet, consectetur adipiscing elit. Suspendisse varius enim in eros elementum tristique. Duis cursus, mi quis viverra ornare, eros dolor interdum nulla, ut commodo diam libero vitae erat. Aenean faucibus nibh et justo cursus id rutrum lorem imperdiet. Nunc ut sem vitae risus tristique posuere.

Access thousands of GPUs tailored to your needs

.png)