Production-grade orchestration for AI

Accelerate developer velocity and improve reliability by combining production-ready Kubernetes, an HPC-grade Slurm scheduler, and managed instances with multi-tenancy and enterprise observability.

Orchestration, high-performance, and reliability for AI workloads

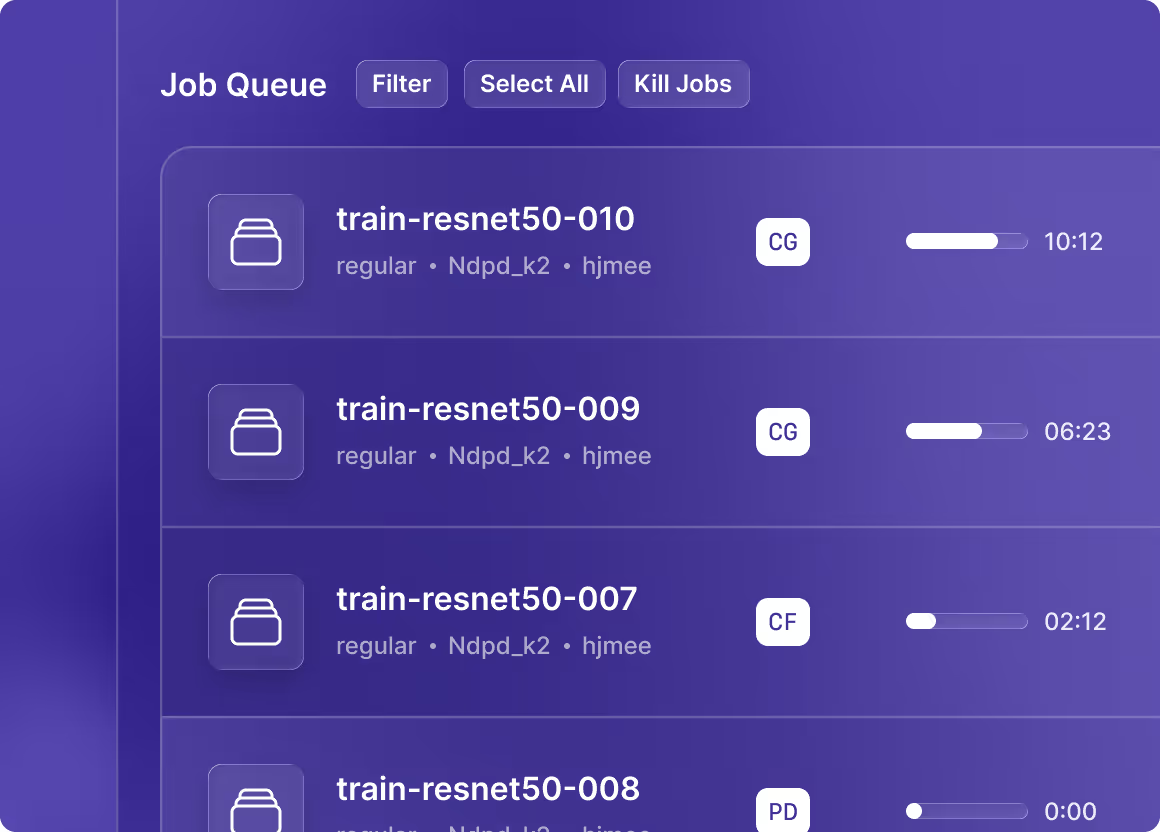

Managed Slurm

Make large GPU training runs manageable with an HPC-grade Slurm batch scheduler that runs on Kubernetes.

- Create reliable R&D timelines with scheduled queues for large-scale training

- Simplify management of mixed workloads across applications

- Retain a familiar environment for HPC teams transitioning to AI

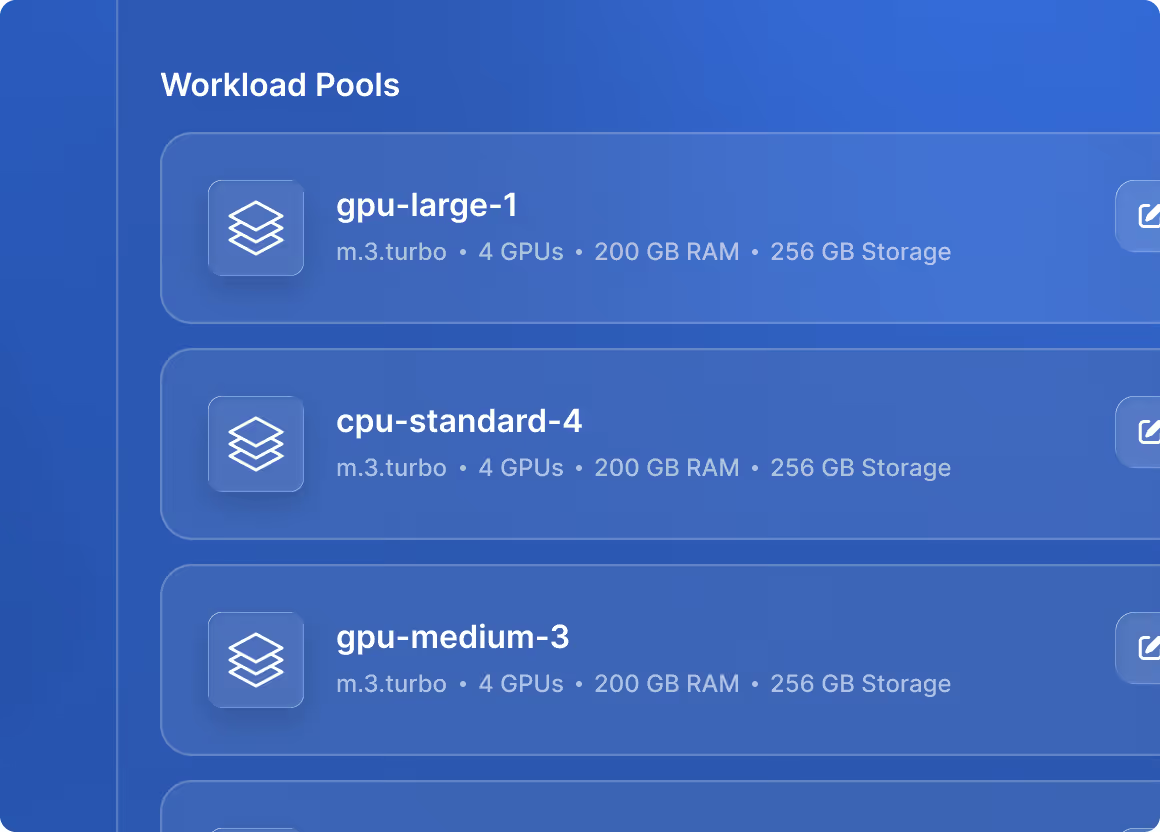

Nscale Kubernetes Service (NKS)

Run production-ready Kubernetes and lightweight, virtual Kubernetes clusters for a range of workloads and experiments.

- Take full control of Kubernetes with fast spin-up, multitenancy isolation, and failure recovery in a production-ready orchestration environment

- Reduce time-to-market and operational bottlenecks with virtual Kubernetes clusters — provisioned in minutes

- Scale with ease to enterprise-grade super-clusters

Instances

Remove hardware lifecycle complexity with compute flexibility that fits your needs.

- Maximize performance for intensive workloads with managed bare-metal nodes

- Stay fast with Virtual Machines for experimental workloads

- Keep data and network sovereignty with VPC isolation

Unified platform services for optimized runs

Shorten cycle times

Spin up developer test environments in minutes and move experiments to production with GPU-aware scheduling and autoscaling.

Reduce operational bottlenecks

Remove complexity and reduce outages with intelligent GPU placement, orchestration, and observability.

Provide predictable budgeting

Spin up bare metal or VMs with lifecycle-managed instances to simplify capacity planning and costs.

The Nscale Production Engine

Lorem ipsum dolor sit amet, consectetur adipiscing elit. Suspendisse varius enim in eros elementum tristique. Duis cursus, mi quis viverra ornare, eros dolor interdum nulla, ut commodo diam libero vitae erat. Aenean faucibus nibh et justo cursus id rutrum lorem imperdiet. Nunc ut sem vitae risus tristique posuere.

Access thousands of GPUs tailored to your needs

.png)