Experience lightning-fast inference with Nscale Cloud's seamless integrations with the latest AI frameworks including TensorFlow Serving, PyTorch, and ONNX Runtime.

We offer GPU-accelerated nodes designed for efficient AI and Machine Learning Inference at competitive prices. Our experienced team at Nscale manages system optimisations and scaling, allowing you to focus on the science instead of infrastructure administration.

Nscale’s cutting-edge model optimisations and simplified orchestration and management features guarantee quicker results and enhanced performance while maintaining accuracy.

Simplified resource management with automated orchestration and scheduling using Kubernetes and SLURM.

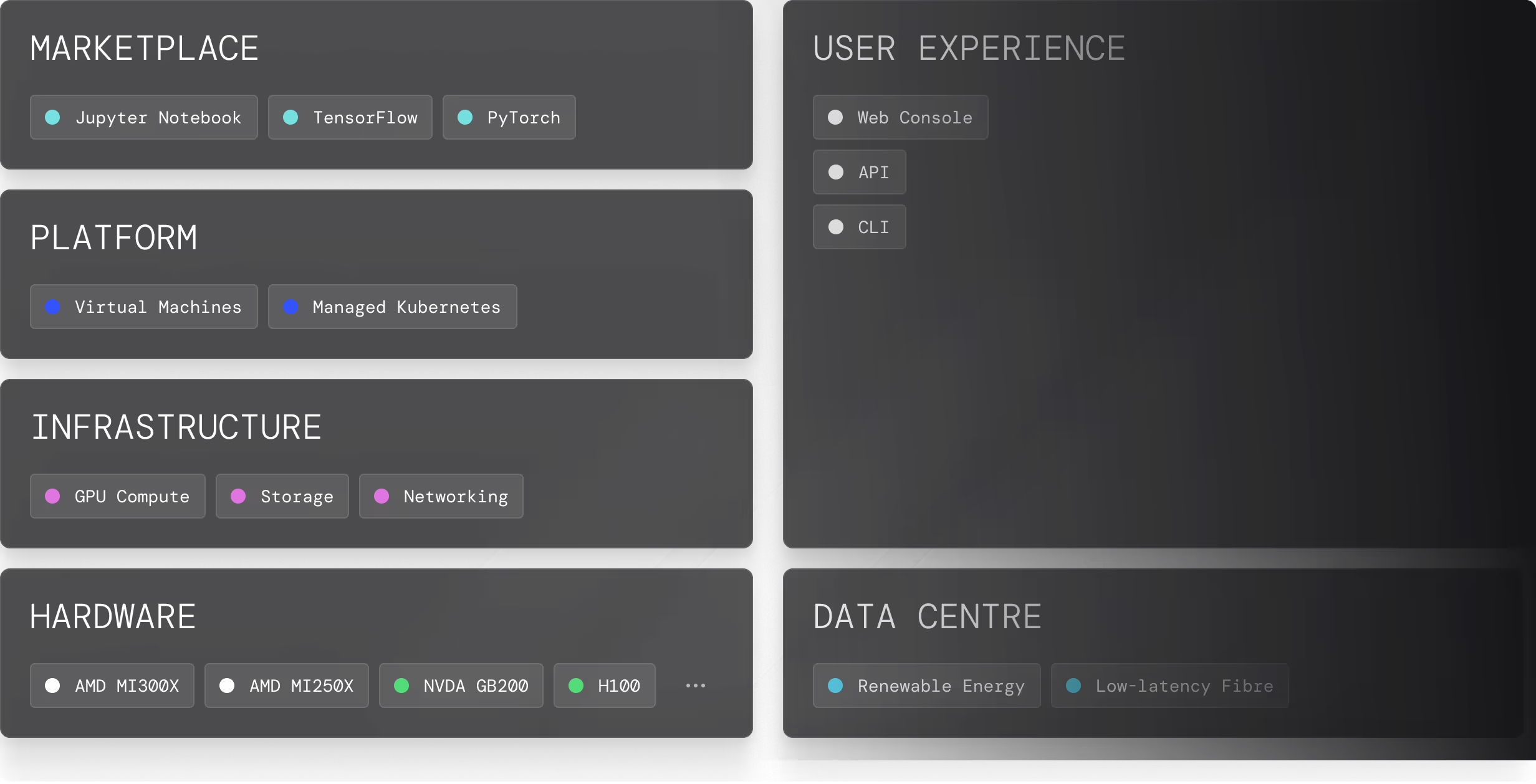

Nscale provides a complete technology stack for running intensive inference workloads in the most efficient and high-performing way possible.

Nscale accelerates the journey from development to deployment, delivering faster time to productivity for your AI initiatives.

Nscale owns and operates the full AI stack, from its data centre to the sophisticated orchestration layer, which allows it to optimise each layer of the vertically integrated stack for high performance and maximum efficiency. Our aim is to democratise high-performance computing by providing our customers with a fully integrated AI ecosystem and access to GPU experts who can optimise AI workloads, maximise utilisation and ensure scalability.

Nscale offers a variety of GPUs to meet different requirements. Our lineup includes NVIDIA models such as the A100, H100, and GB200, which are optimised for a range of workloads including AI and ML inference.

Nscale is committed to environmental responsibility, utilising renewable energy sources for our operations and focusing on sustainable computing practices to minimise our carbon footprint.

Our AI inference service leverages cutting-edge GPUs, optimised for both batch and streaming workloads. With our integrated software stack and orchestration using Kubernetes and SLURM, we provide unmatched performance, scalability, and efficiency.
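
To illustrate the difference between batch and streaming inference mentioned above, here is a minimal Python sketch. It is not Nscale's API: `predict`, `run_batch`, and `run_streaming` are hypothetical names, and `predict` is a stand-in that simply doubles its inputs where a real deployment would call a GPU-backed model.

```python
from typing import Iterable, Iterator, List, Sequence


def predict(batch: Sequence[float]) -> List[float]:
    """Hypothetical stand-in for a model call: doubles each input."""
    return [2.0 * x for x in batch]


def run_batch(inputs: Sequence[float], batch_size: int) -> List[float]:
    """Batch mode: all inputs are known up front and processed in fixed-size chunks."""
    outputs: List[float] = []
    for start in range(0, len(inputs), batch_size):
        outputs.extend(predict(inputs[start:start + batch_size]))
    return outputs


def run_streaming(inputs: Iterable[float]) -> Iterator[float]:
    """Streaming mode: each result is yielded as soon as its input arrives."""
    for x in inputs:
        yield predict([x])[0]


# Batch: five inputs processed in chunks of two.
batch_results = run_batch([1.0, 2.0, 3.0, 4.0, 5.0], batch_size=2)

# Streaming: inputs are consumed one at a time from an iterator.
stream_results = list(run_streaming(iter([1.0, 2.0, 3.0])))
```

Batch mode generally favours throughput, since larger chunks keep an accelerator busy, while streaming mode favours latency, since each result is returned without waiting for other inputs.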